I worked on the unreleased VR music video FLEECE by Crystal Castles. Shaw Walters was the lead developer and Ronen V was the director.

Hello! Welcome to the progress page for an upcoming Pittsburgh artist-based music video!

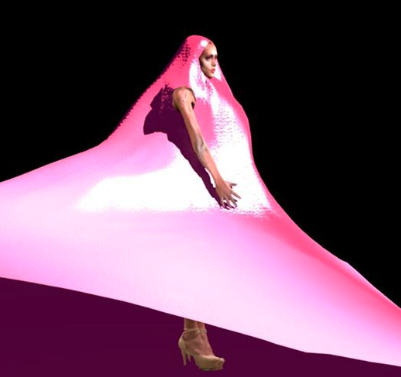

Check out my progress so far! This is Moon Baby’s motion capture data rigged up to a 3D scan of her with some simulated hair that doesn’t want to sit still:

We also captured facial motion capture data.

I also made this tongue in Maya:

Performance for Kool Keith

Performance #?

I have been performing for almost a year now and have lost track of the count. On February 17th Projectile Objects and I created visuals for Cosmic Sound’s Altared I. I wrote about the process here.

Performance #3

On October 6th Anna Henson and I created the visuals for VIA 2017. It was a great honor to work with such talented musicians and the entire VIA crew.

Video by Anna Henson

Photos by Ryan Micheal White

Photo by a rando

Tests leading up to VIA:

Performance #2

Here is a shader I coded for Pittsburgh’s first algorave at Pierce Studios. Thanks to Kevin Bednar for putting the algorave together.

The music is Enth by Crystal Castles.

Performance #1

Photos from March 30th, 2017 at Spirit.

Just The Right Height. Photo by Lauren Goshinski

Me on visuals next to Lauren Goshinski, the organizer of the Femme night. Video by Sam Barton; without him I would have no documentation of this night.

Visuals are a modified shader from https://pixlpa.com/. I added sound frequency reactivity and live edited it with a feedback loop created by pointing a webcam at the projection.
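For a rough idea of what sound frequency reactivity involves, here is a minimal sketch. It is not the code used that night. It assumes a short window of audio samples is available each frame and reduces it to one level per frequency band, which could then be passed to a shader as uniforms. The function name `band_energies`, the band edges, and the normalization are all hypothetical choices made for illustration.

```python
import cmath
import math


def band_energies(samples, sample_rate, bands):
    """Return one 0..1 level per (low_hz, high_hz) band (hypothetical helper)."""
    n = len(samples)
    # Naive DFT magnitudes up to the Nyquist bin; fine for small windows,
    # a real-time setup would use an FFT library instead.
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        mags.append(abs(acc) / n)

    bin_hz = sample_rate / n  # frequency width of each DFT bin
    levels = []
    for low, high in bands:
        in_band = [m for k, m in enumerate(mags) if low <= k * bin_hz < high]
        levels.append(sum(in_band) / len(in_band) if in_band else 0.0)

    # Scale so the loudest band is 1.0; silence stays at 0.0.
    peak = max(levels, default=0.0) or 1.0
    return [level / peak for level in levels]


# Example: a 500 Hz tone should light up the middle band.
tone = [math.sin(2 * math.pi * 500 * t / 8000) for t in range(256)]
bass, mid, treble = band_energies(tone, 8000, [(0, 250), (250, 2000), (2000, 4000)])
```

In a setup like this, `bass`, `mid`, and `treble` would be sent to the shader once per frame and used to drive things like color or distortion amounts.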

Elle Excess