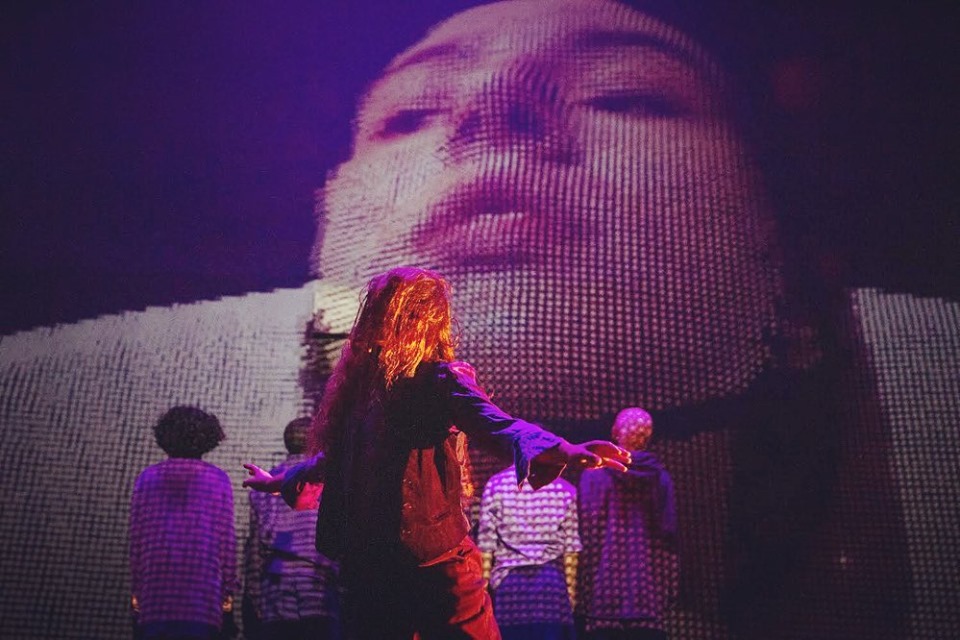

Work as an associative media designer for Michiyaya’s /wē/, a collaboration with Jess Medenbach.

photograph by Shannel Resto

photograph by Shannel Resto

I created some promotional material as well.

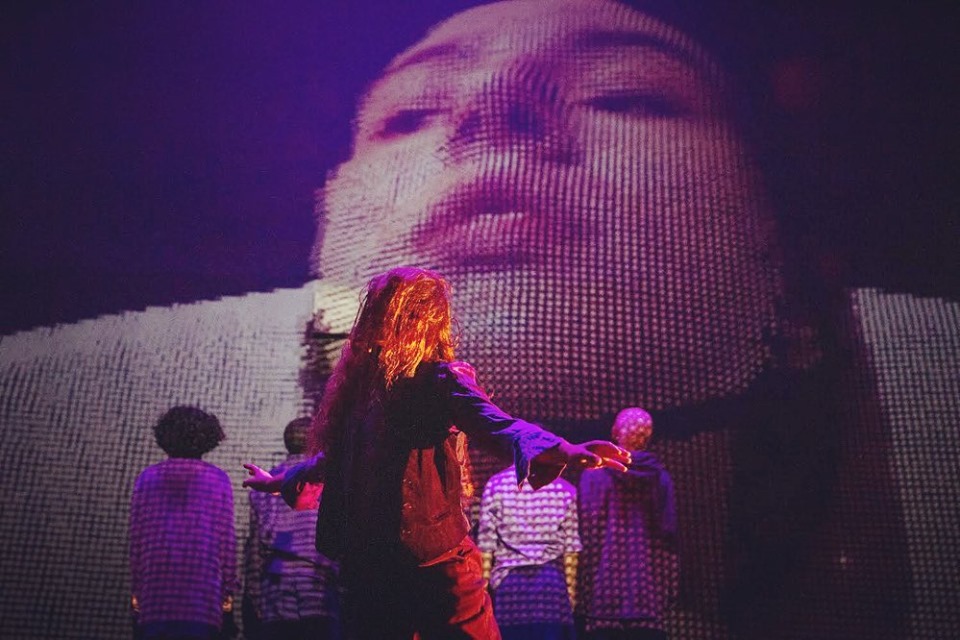

In collaboration with the performance entity slowdanger, I wrote custom software to add interaction to a 16-foot LED ring made by ProjectileObjects. He writes about the creation of the ring here. I also contributed a guide to the theory of empathy machine, which was explored through workshops held around empathy machine performances.

I was interviewed by Jennifer Nagle Myers about this piece. I am really proud of how this interview turned out; you can read it here.

empathy machine has been performed across the East Coast. You can read more about empathy machine (and an interview with slowdanger) here, and find upcoming performances here.

I also created promotional material using OpenFrameworks and OpenCV.

eCosystem is an audio/visual (A/V) set with {arsonist} on audio and me on visuals.

Description:

Pittsburgh’s {arsonist} (Danielle Rager) and Char Stiles use live coded audio and visuals to create a simulated ecosystem. The flux of the ecosystem is reflective of the reactive nature of collaboration and the ability to dynamically define and modify rules in a live coding performance. The simulated ecosystem’s state feeds back to alter the music and the visuals, leading to the genesis and destruction of synthesized life forms and their sounds. Thus, the simulated ecosystem begins to mirror that ecosystem which exists in the space between Rager and Stiles’s two machines, a symbiotic exchange of human agency and technological determinism.

We have performed at the Mattress Factory in Pittsburgh, at DADS (Digital Art Demo Space) in Chicago, as well as at a performance hosted by Alia Musica, also in Pittsburgh.

Projectile Objects, a Pittsburgh-based producer & VJ, wrote about eCosystem along with the two other A/V sets at the Mattress Factory. You can read that here.

For booking, email ecosystem@computerfaith.com

Confronted is a project in collaboration with Zaria Howard and Tatyana Mustakos.

We started with the question: What would happen if you were meant to face the AI-laced creations that you make? Through the process, we recontextualized the sketch2face research to reimagine the relationship between the sketcher and the sketched humans. This manifests as a sketch2face implementation in VR.

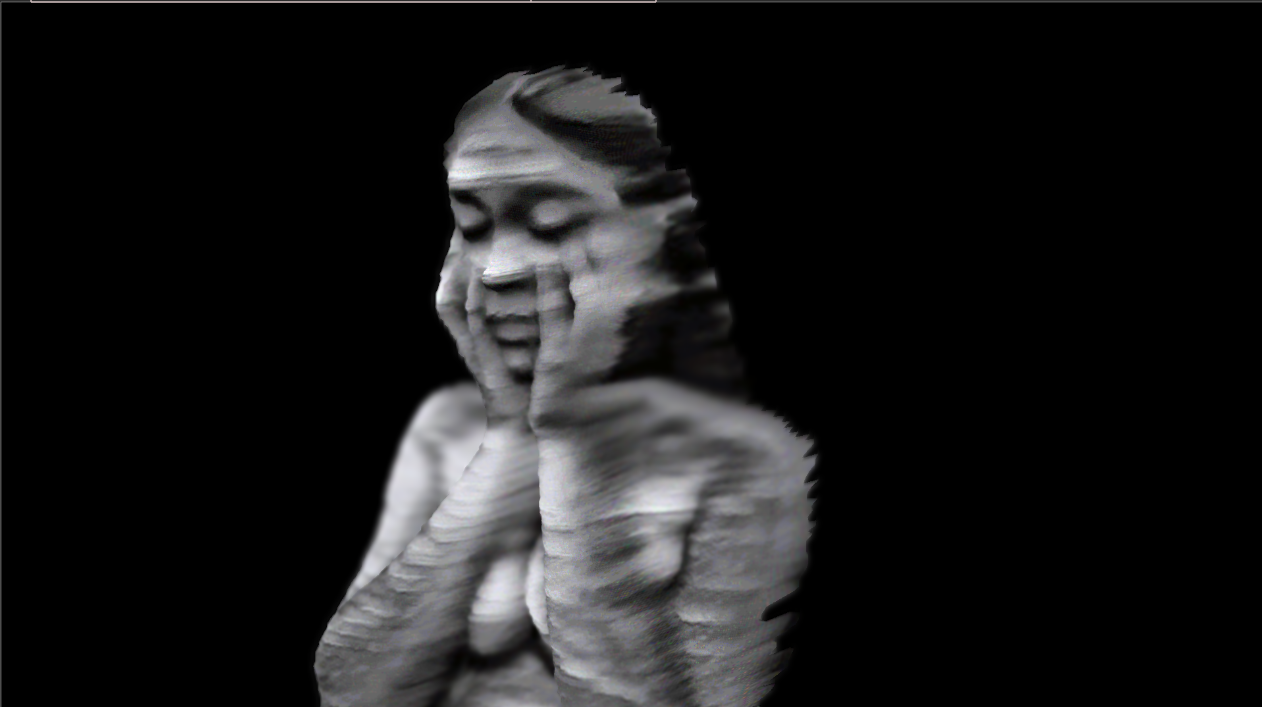

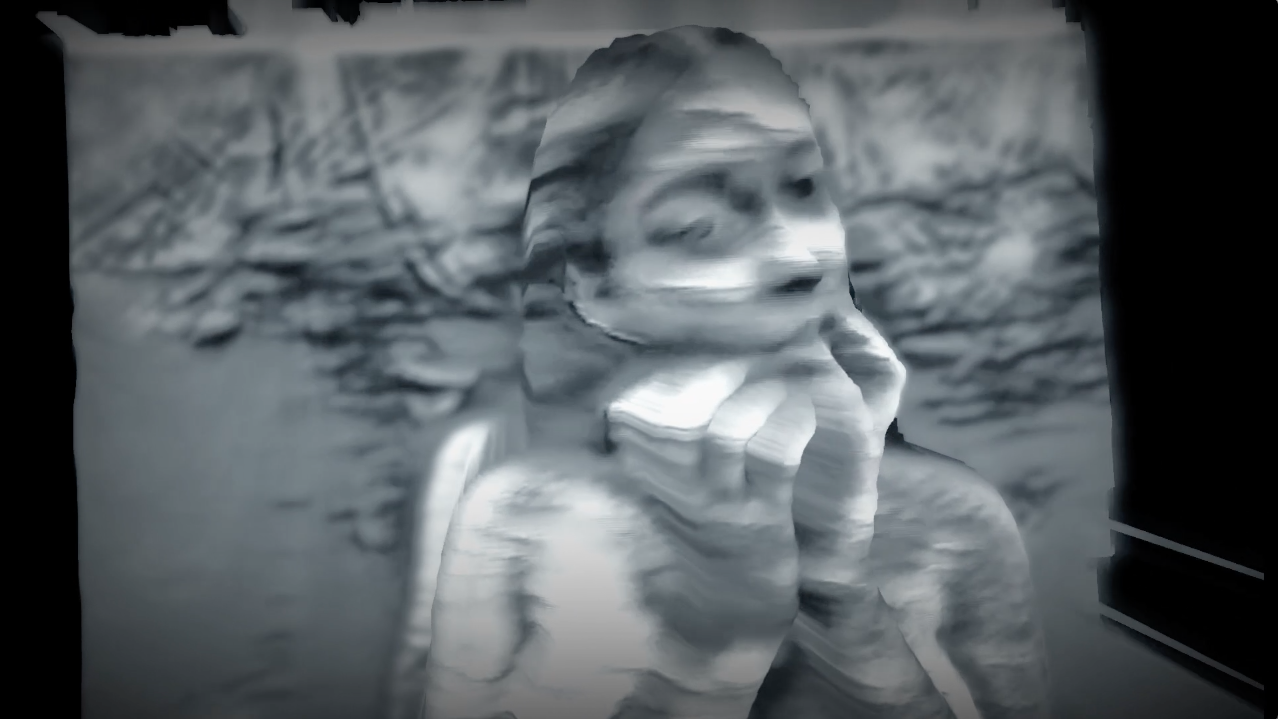

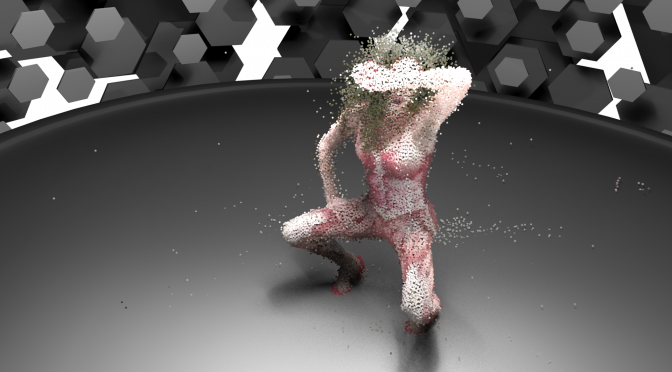

Devices is a 6-minute audio-visual meditation, a collaboration between snakeeyes and Char Stiles. The motivation behind creating Devices is the technologization and digital digestion of pain online. Social media is used to effectively “share pain,” a theme the work explores through the digital filter: the manipulation, digitization and distortion of the physical body. Because the experience is rendered as a video, it can be easily shared and distributed online.

Isabel, who suffers from PTSD, wrote Devices about the effect that this disorder has on her intimate relationships, as well as its effects on her physical body. The work uses emerging media (volumetric filmmaking, machine learning, 3D scanning) to capture and animate Isabel’s body in a way that reflects the physical symptoms she has. The experience, when released, will hopefully raise awareness of the complexity of this issue.

Devices was featured in the Internet Moon Gallery for the month of July 2019.

You can see the exhibition here and read about it here.

snakeeyes (Isabel Vazquez) is a multidisciplinary Indigenous American artist/performer based in NYC/London. She performed her song Devices while I captured it as 2D footage, 3D Kinect footage via Depth Kit, and 3D multi-Kinect footage via CMU’s Panoptic Studio. The footage will be processed and digested, rendering out a video experience, which can be easily shared online.

I write about the process at charstiles.com/devices-wip and charstiles.com/machine-learning-component-of-devices/

computerfaith.com is a website where you can put all your trust in the computer. You can pray to the computer, you can marvel at the discrete math behind the computer, you can use its binary bathroom and explore the institute. I was interested in deconstructing the parts that make up an algorithm, and how, when we piece together this entire structure, we are accepting the mistakes that any one of these cogs could have made.

CharStiles.com/astroturf2.html

Astroturfing is defined as “the practice of masking the sponsors of a message or organization to make it appear as though it originates from and is supported by a grassroots participant(s).” The largest exporter of online astroturfing is the Chinese Government’s 50 Cent Army (五毛党). Millions of posts are made by low-paid workers to sway public opinion in favor of the government. This piece takes all the questions in posts made by these workers. They are very subtle in their work, and a question is enough to make one wonder.

Read Digital America journalist Kevin Johnson’s response to Astroturfing here.
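As a rough illustration of what "taking all the questions" from a set of posts could look like, here is a minimal sketch. It is not the code behind the piece: the function name `extract_questions`, the input format (a plain list of post strings), and the punctuation-based sentence splitting are all assumptions made for this example.

```python
import re

# Assumption: a "sentence" is a run of text ending in common Chinese or
# Latin end punctuation. Real posts would likely need more careful handling.
_SENTENCE = re.compile(r"[^。！？.!?\n]+[。！？.!?]")


def extract_questions(posts):
    """Return every sentence that ends in a question mark (? or ？).

    `posts` is a hypothetical list of post texts, one string per post.
    """
    questions = []
    for post in posts:
        for match in _SENTENCE.finditer(post):
            sentence = match.group().strip()
            if sentence.endswith(("?", "？")):
                questions.append(sentence)
    return questions
```

Called on a list of posts, this keeps only the question sentences, in the order they appear, and drops everything else.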

Exhibited:

Digital America’s Issue no. 9.

Apple Pie: An American Art Show

10 March 2017

Group Exhibition at Goodyear Arts, Charlotte, NC

Shortlisted for Akademie Schloss Solitude and ZKM | Center for Art and Media’s web residency. The call for proposals on the topic »Blowing the Whistle, Questioning Evidence« was curated and juried by Tatiana Bazzichelli, artistic director of the Disruption Network Lab, Berlin.

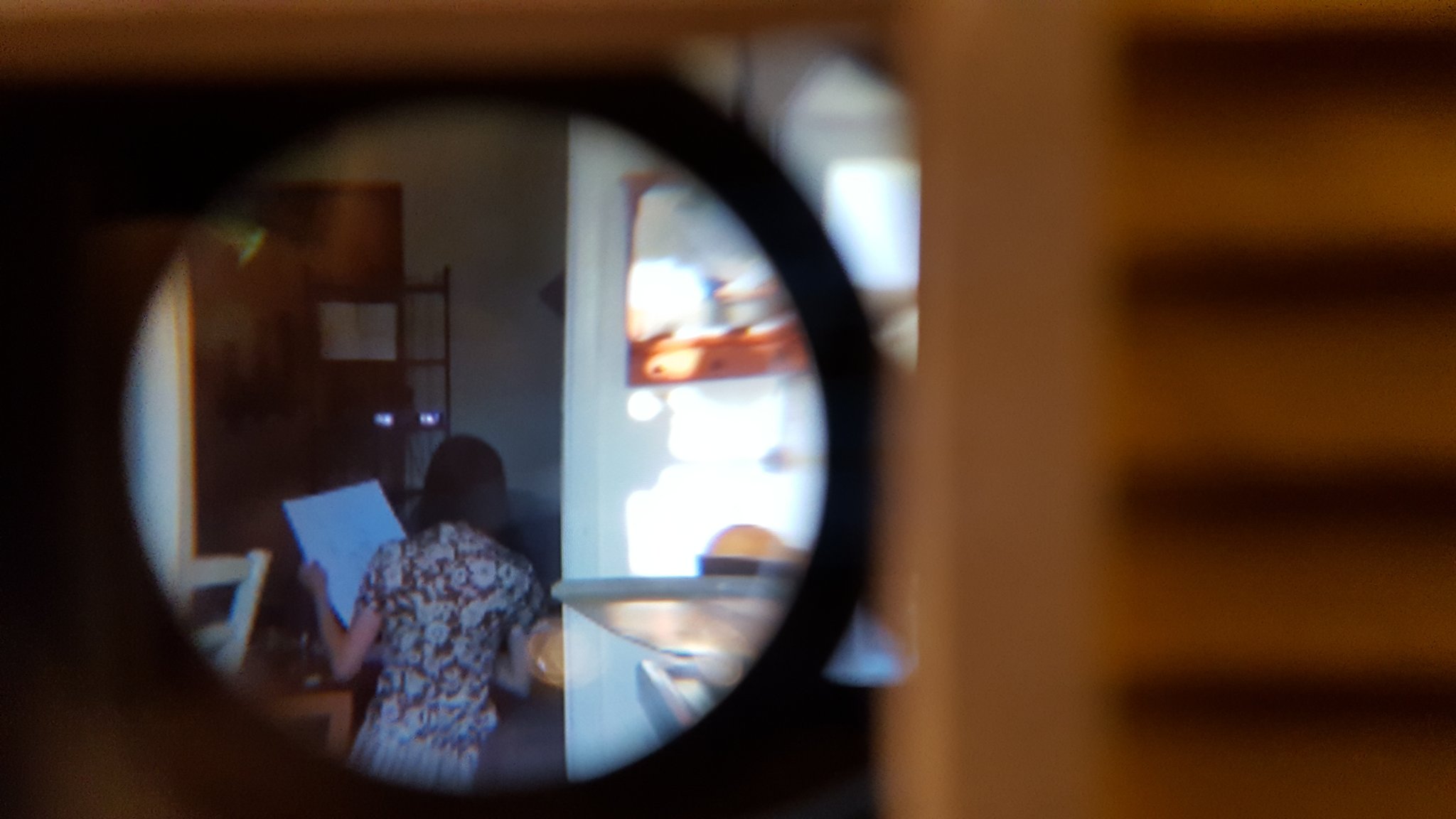

In collaboration with Alicia Iott, The Girls are Home combines VR with a traditional age-old toy, the dollhouse. Inside the dollhouse, an observer can peer through pairs of windows, to see a snippet of our unscripted daily life. By addressing the chasm that exists between cutting edge technology, material culture, and the domestic, plain-Jane realm, The Girls are Home works to bridge that gap.

The Girls are Home was exhibited at the Art && Code: Weird Reality Symposium at the Ace Hotel, Pittsburgh, PA, October 6-9, 2016.

This would not have been possible without the support of the Frank-Ratchye Fund for Art @ the Frontier grant, an endowment founded to encourage the creation of innovative artworks by the faculty, students and staff of Carnegie Mellon University.

This piece was also supported by The Henry Armero Memorial Award for Inclusive Creativity. The Girls are Home was exhibited in the winners show at the Miller Gallery, Pittsburgh, PA, on September 30th, 2016. This award is in memory of Henry Armero, a late BCSA student who had a bright mind and was astutely multifaceted and interdisciplinary. The Armero Family has established the Henry Armero Memorial Award Fund to honor and further Henry’s creative ideals.

A music video for The Moon Baby in collaboration with Kevin Ramser, Alicia Iott, David Gordon and Guy DeBree. In this piece, a masculine and exorbitant piece of technology, the Panoptic Studio, is the instrument of a surreal expression of sexuality. As Moon Baby performs her song, she transforms from IRL video to a glitching, sensationalized cyber body. In each stage, Moon Baby is digitally abstracted further and further from reality. Each version of herself still carries her essence but is falling deeper into the uncanny valley.

Clickthrough for making of video

Press:

In The Desert at 4am is a virtual space rear-projected onto a four-walled room, where the user can walk around a physical space to explore a virtual reactive environment. The environment is inspired by the feeling of infinity that one feels when alone in a large open space. Open spaces have historically been marked as places for contemplation. Although a vast space is projected, the user is still confined to a 13′ by 11′ box. The walls are limiting and at the same time they are the window into the environment. To counter but also highlight this duality, the scale of the user’s steps is altered in the environment: one step in the physical world equates to 10 steps in the virtual world.
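The step scaling can be pictured with a small sketch. This is only an illustration under assumptions, not the installation's actual code: it assumes the user's position is tracked in feet with the origin at the center of the room, that the scaling is a simple linear multiply, and the name `physical_to_virtual` is made up for this example. Only the 13′ by 11′ room size and the factor of 10 come from the description above.

```python
# Values taken from the description: a 13' by 11' room, 1 step -> 10 steps.
ROOM_WIDTH_FT = 13.0
ROOM_DEPTH_FT = 11.0
STEP_SCALE = 10.0


def physical_to_virtual(x_ft, y_ft, scale=STEP_SCALE):
    """Map a tracked room position to a virtual position.

    Assumption: (0, 0) is the center of the room and positions are in feet.
    The position is clamped to the walls first, since the user cannot leave
    the box, and then multiplied by the scale factor.
    """
    half_w = ROOM_WIDTH_FT / 2
    half_d = ROOM_DEPTH_FT / 2
    x = max(-half_w, min(half_w, x_ft))
    y = max(-half_d, min(half_d, y_ft))
    return (x * scale, y * scale)
```

Under these assumptions, moving one foot in the room moves the viewpoint ten feet in the virtual environment, so the 13′ by 11′ box covers a 130′ by 110′ virtual area.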